Repository Summary

| Field | Value |
|---|---|
| Description | Official implementation of the WACV 2023 paper "Benchmarking Visual Localization for Autonomous Navigation". |
| Checkout URI | https://github.com/lasuomela/carla_vloc_benchmark.git |
| VCS Type | git |
| VCS Version | main |
| Last Updated | 2023-09-25 |
| Dev Status | UNMAINTAINED |
| CI status | No Continuous Integration |
| Released | UNRELEASED |
| Tags | No category tags. |


Packages

| Name | Version |
|---|---|
| carla_visual_navigation | 0.0.0 |
| carla_visual_navigation_agent | 0.0.0 |
| carla_visual_navigation_interfaces | 0.0.0 |

README


# Carla Visual Localization Benchmark

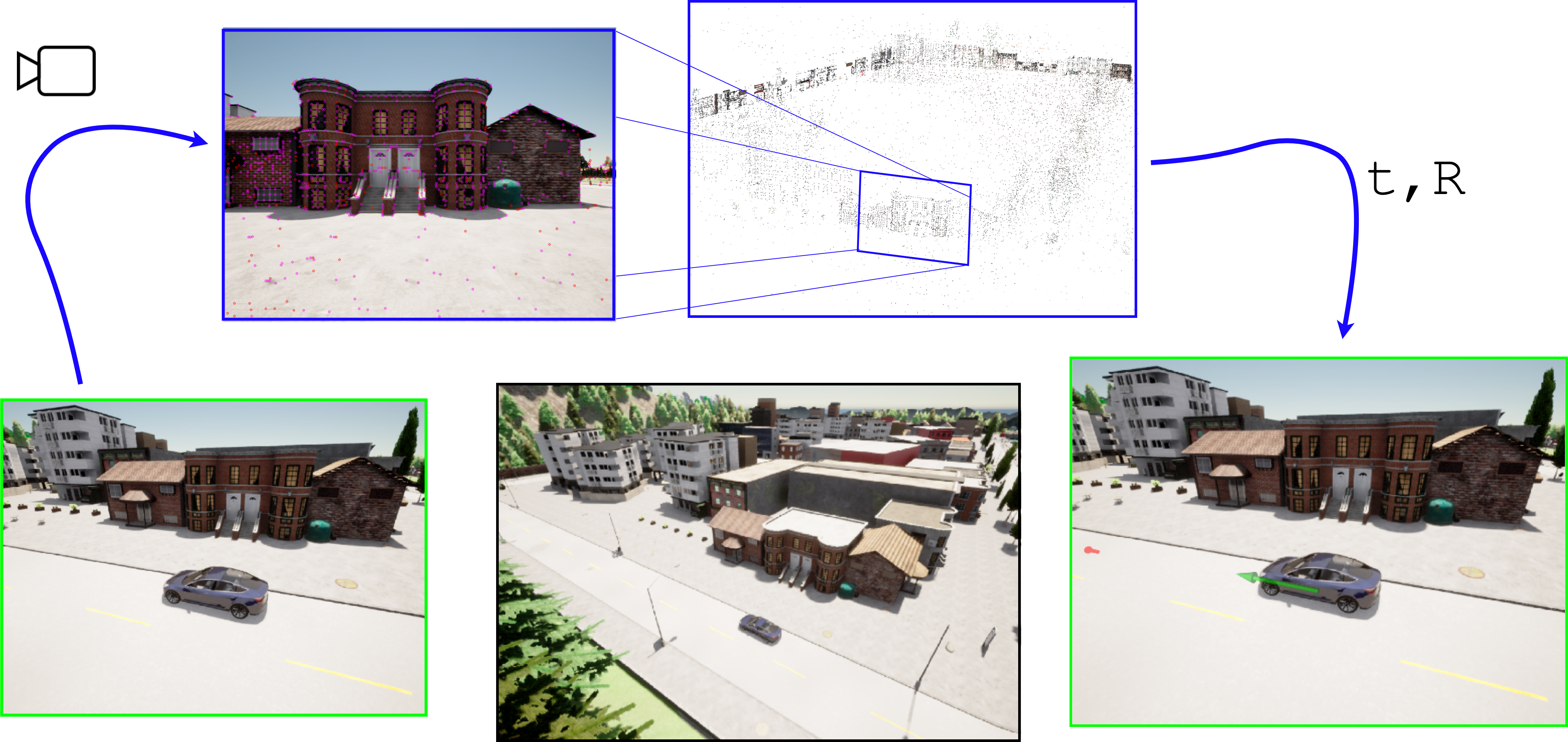

This is the official implementation of the paper “Benchmarking Visual Localization for Autonomous Navigation”.

The benchmark enables easy experimentation with different visual localization methods as part of a navigation stack. The platform enables investigating how various factors such as illumination, viewpoint, and weather changes affect visual localization and subsequent navigation performance. The benchmark is based on the Carla autonomous driving simulator and our ROS2 port of the Hloc visual localization toolbox.

## Citing

If you find the benchmark useful in your research, please cite our work as:

```bibtex
@InProceedings{Suomela_2023_WACV,
    author    = {Suomela, Lauri and Kalliola, Jussi and Dag, Atakan and Edelman, Harry and Kämäräinen, Joni-Kristian},
    title     = {Benchmarking Visual Localization for Autonomous Navigation},
    booktitle = {Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision (WACV)},
    month     = {January},
    year      = {2023},
    pages     = {2945-2955}
}
```

## System requirements

- Docker

- Nvidia-docker

- Nvidia GPU with a minimum of 12 GB of memory (an Nvidia RTX 3090 is recommended)

- 70GB disk space for Docker images
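
The requirements above can be checked before building the images. The following is a minimal sketch, not part of the repository: the function names are hypothetical, the 12 GB threshold is interpreted as 12 × 1024 MiB, and free disk space is measured on `/`, which is an assumption about where Docker stores its images. It does not verify that Nvidia-docker itself is installed.

```python
import shutil
import subprocess

MIN_GPU_MEMORY_MIB = 12 * 1024  # 12 GB GPU memory, from the requirements list
MIN_FREE_DISK_GB = 70           # 70 GB for Docker images, from the requirements list


def parse_gpu_memory_mib(nvidia_smi_output: str) -> list[int]:
    """Parse per-GPU total memory (MiB) from nvidia-smi CSV output, one value per line."""
    values = (line.strip() for line in nvidia_smi_output.splitlines())
    return [int(value) for value in values if value.isdigit()]


def query_gpu_memory_mib() -> list[int]:
    """Return the total memory of each visible GPU, or an empty list if nvidia-smi is unavailable."""
    if shutil.which("nvidia-smi") is None:
        return []
    result = subprocess.run(
        ["nvidia-smi", "--query-gpu=memory.total", "--format=csv,noheader,nounits"],
        capture_output=True, text=True, check=False,
    )
    return parse_gpu_memory_mib(result.stdout) if result.returncode == 0 else []


def check_requirements(docker_root: str = "/") -> dict[str, bool]:
    """Check Docker availability, GPU memory, and free disk space.

    docker_root is an assumed location for Docker's image storage.
    """
    free_gb = shutil.disk_usage(docker_root).free / 1e9
    return {
        "docker": shutil.which("docker") is not None,
        "gpu_memory": any(m >= MIN_GPU_MEMORY_MIB for m in query_gpu_memory_mib()),
        "disk_space": free_gb >= MIN_FREE_DISK_GB,
    }


if __name__ == "__main__":
    for name, ok in check_requirements().items():
        print(f"{name}: {'ok' if ok else 'MISSING'}")
```

If Docker keeps its data on a different partition, pass that mount point as `docker_root`.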

## Installation

The code is tested on Ubuntu 20.04 with one Nvidia RTX 3090. As everything runs inside Docker containers, supporting platforms other than Linux should only require modifying the build and run scripts in the `docker` folder.

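Clone the repository and fetch its submodules:

```sh
git clone https://github.com/lasuomela/carla_vloc_benchmark/
# The submodule command must be run from inside the cloned repository
cd carla_vloc_benchmark
git submodule update --init --recursive
```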

We strongly recommend running the benchmark inside the provided docker images. Build the images:

```sh
cd docker
./build-carla.sh
./build-ros-bridge-scenario.sh
```

Next, validate that the environment is correctly set up. Launch the Carla simulator:

```sh
# With GUI
./run-carla.sh
```

In another terminal window, start the autonomous agent / scenario runner container:

```sh
cd docker
./run-ros-bridge-scenario.sh
```

Now, inside the container terminal:

```sh
cd /opt/visual_robot_localization/src/visual_robot_localization/utils

# Run SfM with the example images included with the visual_robot_localization package.
./do_SfM.sh

# Visualize the resulting model
./visualize_colmap.sh

# Test that the package can localize against the model
launch_test /opt/visual_robot_localization/src/visual_robot_localization/test/visual_pose_estimator_test.launch.py
```

If all three steps complete without errors, you are good to go.

## Reproduce the paper experiments

### 1. Launch the environment

In one terminal window, launch the Carla simulator:

```sh
cd docker
# Headless
./run-bash-carla.sh
```

In another terminal window, launch a container which contains the autonomous agent and scenario execution logic:

```sh
cd docker
./run-ros-bridge-scenario.sh
```

The run command mounts the `/carla_visual_navigation`, `/image-gallery`, `/scenarios` and `/results` folders into the container, so changes to these folders are reflected both inside the container and on the host system.

### 2. Capture the gallery datasets

When running for the first time, you need to capture the images from the test route for 3D reconstruction. Inside the container terminal from the last step:

```sh
cd /opt/carla_vloc_benchmark/src/carla_visual_navigation/scripts

# Generate the scenario files describing gallery image capture and experiments
# See /scenarios/experiment_descriptions.yml for list of experiments
python template_scenario_creator.py

# Launch terminal multiplexer
tmux

# Create a second tmux window into the container using 'shift-ctrl-b' + '%'

# Tmux window 1: start the scenario runner with rviz GUI
ros2 launch carla_visual_navigation rviz_scenario_runner.launch.py town:='Town01'

# Tmux window 2: start gallery capture execution
ros2 launch carla_visual_navigation scenario_executor.launch.py scenario_dir:='/scenarios/gallery_capture_town01_route1' repetitions:=1
```

If everything went okay, you should now have a folder `/image-gallery/town01_route1` with images captured along the route. Repeat for Town10:

```sh
# Tmux window 1
ros2 launch carla_visual_navigation rviz_scenario_runner.launch.py town:='Town10HD'

# Tmux window 2
ros2 launch carla_visual_navigation scenario_executor.launch.py scenario_dir:='/scenarios/town10_route1' repetitions:=1
```

### 3. Run 3D reconstruction for the gallery images

Next, we triangulate sparse 3D models from the gallery images and their camera poses.
The models are saved in the respective image capture folders in `/image-gallery/`.

```sh
cd /opt/carla_vloc_benchmark/src/carla_visual_navigation/scripts

# Check the visual localization methods used in the experiments and run 3D reconstruction with each of them
python create_gallery_reconstructions.py --experiment_path '/scenarios/illumination_experiment_town01'

# Repeat for Town10
python create_gallery_reconstructions.py --experiment_path '/scenarios/illumination_experiment_town10'
```

You can visually examine the reconstructions with:

```sh
cd /opt/visual_robot_localization/src/visual_robot_localization/utils
./visualize_colmap.sh --image_folder '/image-gallery/town01_route1' --localization_combination_name 'netvlad+superpoint_aachen+superglue'
```

### 4. Run the illumination experiments

To replicate the experiment results:

```sh
# Launch terminal multiplexer
tmux

# Create a second tmux window into the container using 'shift-ctrl-b' + '%'

# In the first window, start the scenario runner headless (the rviz GUI slows the simulation down)
ros2 launch carla_visual_navigation cli_scenario_runner.launch.py town:='Town01'

# In the second window, start scenario execution for Town01, with 5 repetitions of each scenario file
ros2 launch carla_visual_navigation scenario_executor.launch.py scenario_dir:='/scenarios/illumination_experiment_town01' repetitions:=5

# Once all the scenarios have been finished, run the scenarios with autopilot to measure visual localization recall
ros2 launch carla_visual_navigation scenario_executor.launch.py scenario_dir:='/scenarios/illumination_experiment_town01_autopilot' repetitions:=1

# Next, measure navigation performance with wheel odometry only
ros2 launch carla_visual_navigation scenario_executor.launch.py scenario_dir:='/scenarios/illumination_experiment_town01_odometry_only' repetitions:=5
```

Completing the experiments can take a long time. Once the experiments have been completed, repeat for the Town10 environment.
The results are saved to `/results/`.

### 5. Run the viewpoint change experiments

The viewpoint change experiments use the same gallery images and sparse 3D models as the illumination experiments. Each viewpoint change (e.g., a change in camera angle) has its own experiment description in `scenarios/experiment_descriptions.yml` and its own sensor configuration file in `carla_vloc_benchmark/carla_visual_navigation/config/viewpoint_experiment_objects/`.

Run an experiment with the following camera position: `z=4.0, pitch=10.0`

```sh
# Tmux window 1
ros2 launch carla_visual_navigation cli_scenario_runner.launch.py town:='Town01' objects_config:='/opt/carla_vloc_benchmark/src/carla_visual_navigation/config/viewpoint_experiment_objects/zpitch/objects_zpitch1.json'

# In Tmux window 2, start scenario execution for Town01 and 5 repetitions of each scenario file
ros2 launch carla_visual_navigation scenario_executor.launch.py scenario_dir:='/scenarios/viewpoint_experiment_zpitch1_town01' repetitions:=5

# Once all the scenarios have been finished, run the scenarios with autopilot to measure visual localization recall
# Remember to define the same sensor configuration file as previously

# Tmux window 1
ros2 launch carla_visual_navigation cli_scenario_runner.launch.py town:='Town01' objects_config:='/opt/carla_vloc_benchmark/src/carla_visual_navigation/config/viewpoint_experiment_objects/zpitch/objects_zpitch1.json'

# Tmux window 2
ros2 launch carla_visual_navigation scenario_executor.launch.py scenario_dir:='/scenarios/viewpoint_experiment_zpitch1_town01_autopilot' repetitions:=1

# If the illumination experiments have already been run, there is no need to measure
# navigation performance with wheel odometry only again: the same results can be used
# for the viewpoint experiments.
# Otherwise, measure navigation performance with wheel odometry only
ros2 launch carla_visual_navigation scenario_executor.launch.py scenario_dir:='/scenarios/illumination_experiment_town01_odometry_only' repetitions:=5
```

Repeat the previous commands for the other camera positions _(zpitch2, zpitch3, zpitch4, ...)_ and viewpoint changes _(roll, yaw)_. The results are saved to `/results/`.

List of the sensor configurations:

| Filename | Pitch | Z |
|-----------------------|-------|------|
| objects_zpitch1.json | 10.0 | 4.0 |
| objects_zpitch2.json | 22.5 | 6.0 |
| objects_zpitch3.json | 27.5 | 7.0 |
| objects_zpitch4.json | 32.5 | 8.0 |
| objects_zpitch5.json | 35.0 | 9.0 |
| objects_zpitch6.json | 37.5 | 10.0 |
| objects_zpitch7.json | 40.0 | 11.0 |
| objects_zpitch8.json | 40.0 | 12.0 |
| objects_zpitch9.json | 40.0 | 13.0 |
| objects_zpitch10.json | 40.0 | 15.0 |
| objects_zpitch11.json | 40.0 | 17.0 |
| objects_zpitch12.json | 40.0 | 18.0 |

| Filename | Yaw |
|-------------------|-------|
| objects_yaw1.json | 90.0 |
| objects_yaw2.json | 67.5 |
| objects_yaw3.json | 45.0 |
| objects_yaw4.json | 22.5 |
| objects_yaw5.json | -22.5 |
| objects_yaw6.json | -45.0 |
| objects_yaw7.json | -67.5 |
| objects_yaw8.json | -90.0 |
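
Because every sensor configuration is run with the same pair of launch commands, the command lines can be generated rather than typed by hand. The following is a rough sketch, not part of the repository: `ViewpointRun` and its methods are hypothetical helpers, and the paths simply extrapolate the naming pattern of the `zpitch1` example. In particular, the `yaw/` subfolder and the `viewpoint_experiment_yaw<N>_town01` scenario directories are assumptions that should be checked against the repository before use.

```python
from dataclasses import dataclass

# Config root copied from the zpitch1 example command.
CONFIG_ROOT = (
    "/opt/carla_vloc_benchmark/src/carla_visual_navigation"
    "/config/viewpoint_experiment_objects"
)


@dataclass(frozen=True)
class ViewpointRun:
    """One sensor configuration (hypothetical helper, not a repository class)."""

    kind: str            # "zpitch" or "yaw"
    index: int           # 1-based index from the filename, e.g. 1 for objects_zpitch1.json
    town: str = "Town01"

    @property
    def objects_config(self) -> str:
        # Assumes every kind follows the <kind>/objects_<kind><index>.json layout.
        return f"{CONFIG_ROOT}/{self.kind}/objects_{self.kind}{self.index}.json"

    def scenario_dir(self, autopilot: bool = False) -> str:
        # Assumes scenario folders follow the naming of the zpitch1 example.
        suffix = "_autopilot" if autopilot else ""
        return (f"/scenarios/viewpoint_experiment_{self.kind}{self.index}"
                f"_{self.town.lower()}{suffix}")

    def runner_command(self) -> str:
        """Command for tmux window 1: headless scenario runner with this sensor config."""
        return ("ros2 launch carla_visual_navigation cli_scenario_runner.launch.py "
                f"town:='{self.town}' objects_config:='{self.objects_config}'")

    def executor_command(self, autopilot: bool = False) -> str:
        """Command for tmux window 2: 5 repetitions normally, 1 for the autopilot run."""
        repetitions = 1 if autopilot else 5
        return ("ros2 launch carla_visual_navigation scenario_executor.launch.py "
                f"scenario_dir:='{self.scenario_dir(autopilot)}' repetitions:={repetitions}")


if __name__ == "__main__":
    # Print the commands for all twelve zpitch configurations.
    for index in range(1, 13):
        run = ViewpointRun("zpitch", index)
        print(run.runner_command())
        print(run.executor_command())
        print(run.executor_command(autopilot=True))
```

The script only prints the commands; the scenario runner still has to be restarted with the matching `objects_config` before each executor run, as in the manual procedure.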